Don’t do RAG: CAG is all you need for knowledge tasks

Understand the distinctions between Retrieval-Augmented and Cache-Augmented Generation, and find out how CAG improves speed while RAG handles real-time information.

Articles tagged with #llm

I’m sitting here with a hot cup of coffee, about halfway through Google’s new paper on "Nested Learning," and I have to be honest: I need to get this out of my head and onto the screen. Usually, when a big lab drops a paper, I skim the abstract, nod ...

An open-source text-to-speech model built for long-form, multi-speaker dialogue.

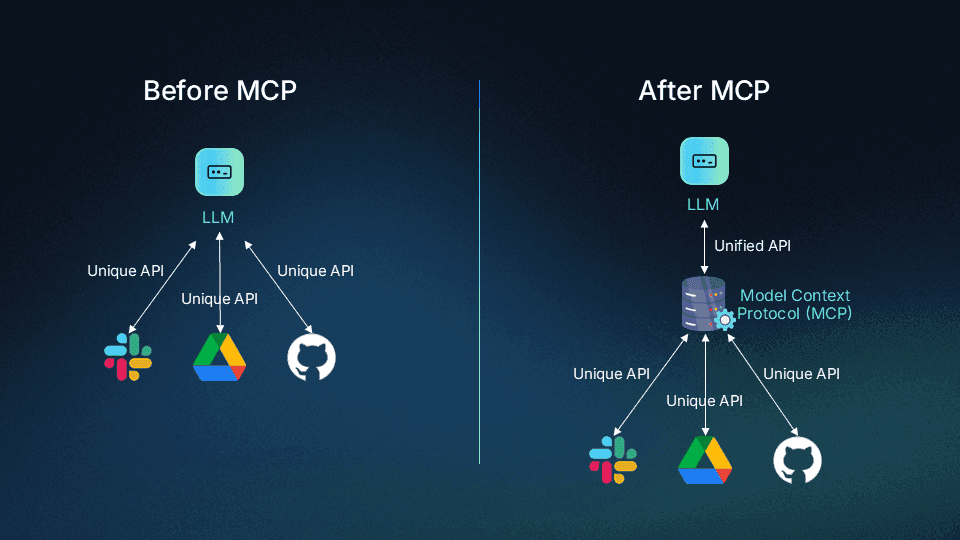

MCP provides a unified, standardized framework for connecting LLMs to external data and tools.

When it comes to enhancing the capabilities of large language models (LLMs), two powerful techniques stand out: RAG (Retrieval-Augmented Generation) and fine-tuning. Both methods have their strengths and are suited to different use cases, but choosi...

I recently embarked on an exciting research journey to explore the vulnerabilities of large language models (LLMs) like ChatGPT, Anthropic's Claude, Gemini, and similar models. My goal was to see how hackers could exploit them through prompt injection attacks....